A confession: I asked ChatGPT to help me write this.

I’ve been a writer for most of my life, and a teacher of writing for my entire adult life, but I still can’t stand the sight of a blank page (or screen). I have a hard time focusing my early, often disorganized, thoughts and I feel immense pressure to word everything perfectly the first time, even though my years of training have taught me that a messy draft on paper is better than a perfect one in my head.

Some lessons are easier to teach than to learn.

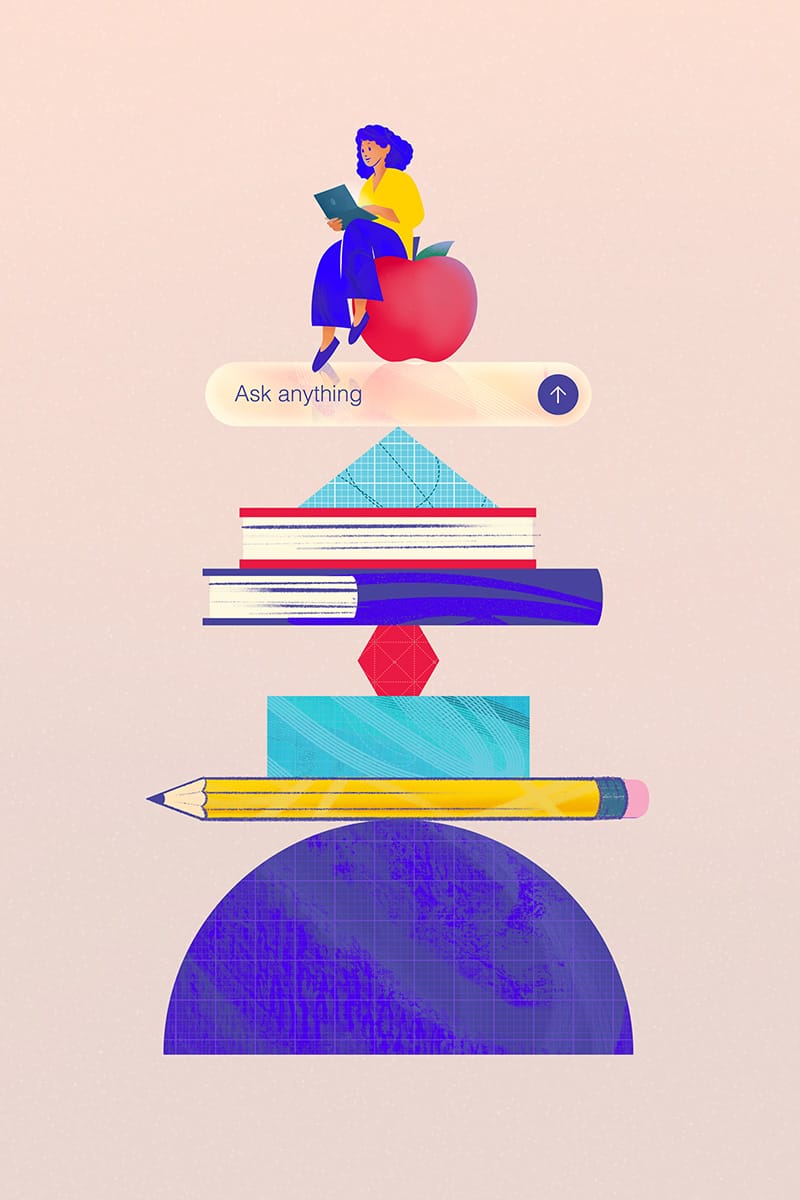

Enter generative AI: I prompt the tool based on my knowledge of my current writing situation, and I read and analyze the output it gives me before making decisions about my own text. Some of AI’s ideas or phrasing ring true, and others provide me with a point of departure. Along the way, I get a better sense of my own goals for writing and the kinds of choices I can make to deliver my message effectively.

More often than not, seeing what’s wrong with the AI output helps me home in on what I actually want to say and how I want to say it.

It can also help my students: undergraduate and graduate students in the Department of Writing and Rhetoric who might take my Literacies of Artificial Intelligence or Rhetorics of AI and Big Data courses, but also the computer science, advertising and public relations, and health sciences majors who take my Professional Writing class.

They are all aware that AI is drastically changing the workforce they’re about to enter, and my courses help prepare them for this reality.

In Professional Writing, for instance, we talk about the flood of AI-generated resumes submitted to every job they might apply for (not to mention the AI tools used to screen all those resumes), as well as the potential expectation that they’ll use AI to write (or code) more efficiently or more uniformly once they’re on the job. We experiment with using AI to do some of that writing, and we have critical conversations about the good, the bad and the uncanny results of those experiments, from the made-up (so-called “hallucinated”) sources ChatGPT confidently provides, to its apparent love of the em dash, to its under-supported examples and surface-level analysis.

We talk about what’s gained and lost when writing tasks are offloaded in part or wholesale to AI tools, and how to decide when we’re OK with that offloading and when we aren’t. In other words, we talk about what it means to be a writer in the age of AI.

As a teacher, I’m transparent about my own uses of AI and the things I think these tools do well, like helping me beta test instructions for activities and turning my class notes into slides.

I don’t outright ban the use of these tools in my classes because doing so both fails to prepare students for workplace writing and robs them of an opportunity to practice a new form of critical digital literacy.

Instead, we foreground discussion of the rhetorical situation (including our audience, genre and purpose) with each writing assignment. We have open conversations about the aspects of the assignment with which an AI tool might be helpful, like comparing and contrasting sources, or acting as a test reader with a specific persona (as well as aspects AI is unlikely to help with much at all, like describing the writer’s own experiences).

My hope is that as a result of these conversations, students leave my class better able to articulate what they bring to the table as writers in addition to considering where and how AI tools will fit into their writing processes.

More than anything, I want my students to feel like what they know and what they have to say as writers still matter, so maybe they can feel a little bit better when they sit down in front of a blank page.