A team of University of Central Florida researchers is tackling a problem that’s hindered the development of better hurricane-prediction models and cyberdefense systems for years.

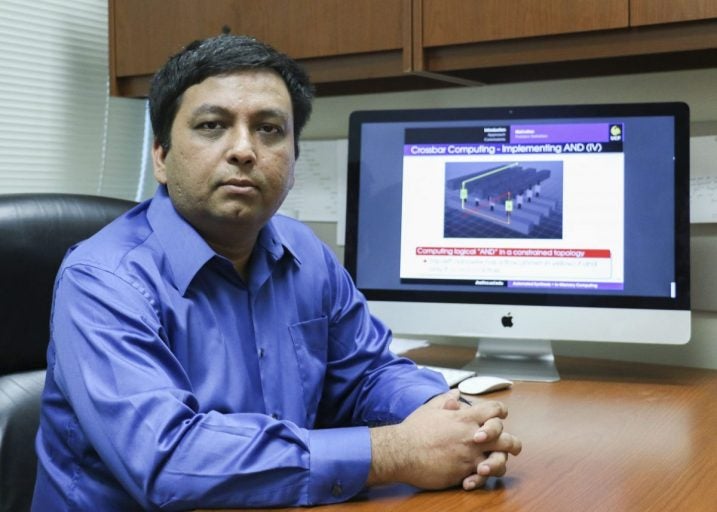

Sumit Jha, an associate professor in UCF’s College of Engineering and Computer Science, was recently awarded a $1 million National Science Foundation grant to make faster, better computer chips that can process big data in record time without overheating.

“Between the 1970s and say 2010, processing power of computers was going up every two to three years,” Jha said. “But then in the last decade, even if you have bought a new laptop or desktop, the processing speed has not gone up. This is a huge problem for society.”

One reason is that in current computer designs, the memory and the processor occupy separate physical spaces. The gap between them is known as the von Neumann barrier, named after John von Neumann, one of the first scientists to describe this type of computer design.

This design is energy- and time-intensive because data must move back and forth between the computer’s memory and processor, Jha said. As modern computer systems collect ever larger amounts of data, the cost in time and energy grows, and the chip runs hotter and hotter.

“If you’re going to burn too much energy, the chip is going to burn,” Jha said.

Jha’s solution: Eliminate the gap between memory and processor by putting them on the same chip.

Eliminating this barrier would make it possible to process large amounts of data more quickly. This could lead to better hurricane predictions and better security against cyberattacks, as well as leaps in energy efficiency for other computing technologies, such as self-driving cars, smart cameras and smartphones.

“So now you can actually start doing a whole different way of computing,” he said.

While other researchers have worked on combining memory and processors, Jha’s approach is novel in that his team’s hardware design can work with existing computer programming languages.

“I think one of the unique things about this project is that we do not want to change the software stack,” Jha said. “Let’s start with what people have and then do the hardware design required to run it on a new processor. If you were going to rewrite all of the existing software, it would be very, very expensive. The relatively simple Y2K bug is estimated to have cost a few hundred million dollars to fix. We cannot expect the society to rewrite all existing software.”

Jha’s team has run successful simulations using the approach. Now, with the NSF grant, the design will be fabricated and tested by researchers from SUNY Polytechnic Institute, who are joint recipients of the grant. Work is expected to start in October.

Jha’s research team includes graduate students Arfeen Khalid, Amad Ul Hassen, Benjamin Shaia, Jim Pyrich, Dwaipayan Chakraborty and Sunny Raj. Recent UCF graduates Alvaro Velasquez and Julio Cesar Gutierrez were also instrumental in the research, Jha said.

Jha came to UCF in 2010 after earning his doctorate in computer science from Carnegie Mellon University.